Bridging Decades: Practical AI Integration with HPE Nonstop Systems

Infrasoft

Andrew Price, Director of Business Operations, Infrasoft

In a data centre, an HPE Nonstop system processes large volumes of financial transactions reliably and continuously. Elsewhere within the organisation, an analyst may be using a modern AI assistant to explore data and identify patterns of interest. Increasingly, organisations are asking how these two worlds can be connected in a way that is useful, controlled, and operationally sound.

This article describes a practical example of how AI tooling can be integrated with Nonstop systems, not as a replacement for existing applications, but as an additional way to access and analyse information already held on the platform.

The Integration Challenge

Nonstop systems underpin critical infrastructure, including payment networks, exchanges, healthcare platforms, and telecommunications services. Their strength lies in their specialised architecture and long-established operational model. At the same time, that specialisation can make ad hoc data access difficult.

Answering relatively simple questions often requires detailed system knowledge, custom programs, or batch-oriented processes. Extracts may be run overnight, data staged elsewhere, and results analysed after the fact. This works, but it is slow and inflexible when exploratory analysis is required.

A natural question follows: can modern AI tools query Nonstop-resident data directly, without changing existing applications or operational practices?

The Model Context Protocol

The Model Context Protocol (MCP) provides a standard way for AI clients to interact with external systems. Rather than moving data into an AI platform, MCP allows an AI client to request specific information from a system and receive structured responses.

MCP defines how capabilities are described, how requests are made, and how results are returned. It is designed to be extensible and secure, and does not impose requirements on how the underlying system is implemented.
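To make the request and response shape concrete, the following is a minimal sketch of an MCP tool invocation. MCP messages are JSON-RPC 2.0, and a tool is invoked with the `tools/call` method. The tool name `list_transactions`, its arguments, and the sample response are hypothetical and chosen only for illustration; they are not taken from any actual uLinga Nexus configuration.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build an MCP tools/call request as a JSON-RPC 2.0 message."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def extract_text(response):
    """Return the text items from a tools/call result, or raise on a JSON-RPC error."""
    if "error" in response:
        raise RuntimeError(response["error"].get("message", "unknown error"))
    return [
        item["text"]
        for item in response["result"]["content"]
        if item.get("type") == "text"
    ]


# Hypothetical tool name and arguments, for illustration only.
request = build_tool_call(1, "list_transactions", {"terminal_id": "T001", "limit": 10})
wire = json.dumps(request)  # what the AI client would send to the MCP server

# Hypothetical response of the kind an MCP server might return.
response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {"content": [{"type": "text", "text": '{"transactions": []}'}]},
}
texts = extract_text(response)
```

The point of the sketch is that the AI client names a capability and supplies arguments; it never needs to know how the underlying system stores or serves the data.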

The challenge in a Nonstop environment is not the protocol itself, but providing a clean and reliable bridge between MCP and native Nonstop interfaces.

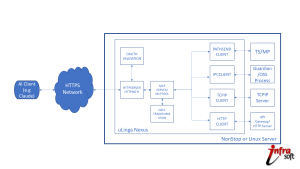

uLinga Nexus as an MCP Server

uLinga Nexus fills this role by acting as an MCP server running on Nonstop systems. It translates between MCP requests from AI clients and the protocols and interfaces already used on Nonstop.

On the AI side, uLinga Nexus implements the MCP server specification and exposes selected Nonstop capabilities in a controlled way. On the Nonstop side, it communicates using established mechanisms such as Pathmon servers, Guardian processes and Enscribe databases. No changes to existing applications are required.

In practical terms, when an AI client requests access to Nonstop applications or data, the request is sent to uLinga Nexus, which translates it into the format required by the backend application and forwards it to that application. The results are returned in a structured form that the AI client can analyse. The entire exchange completes in real time, without batch processing or data replication.

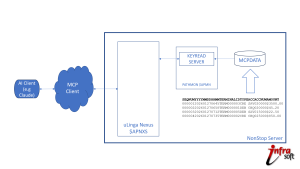
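The translation step described above can be sketched as follows. This is an illustrative outline only, not the uLinga Nexus implementation: the fixed-width record layouts, the field names, and the `fake_backend` function standing in for a Nonstop server are all assumptions made for the example.

```python
import json
import struct

# Hypothetical request layout: 8-byte terminal id, 4-byte big-endian record limit.
REQUEST_FORMAT = ">8sI"
# Hypothetical reply record: timestamp, terminal id, type, account, amount in cents.
RECORD_FORMAT = ">14s8s2s10sq"
RECORD_SIZE = struct.calcsize(RECORD_FORMAT)


def encode_request(arguments):
    """Translate structured tool arguments into a fixed-width request record."""
    terminal = arguments["terminal_id"].encode("ascii").ljust(8)
    return struct.pack(REQUEST_FORMAT, terminal, arguments.get("limit", 100))


def decode_reply(buffer):
    """Translate a buffer of fixed-width reply records into dictionaries."""
    if len(buffer) % RECORD_SIZE != 0:
        raise ValueError("reply length is not a whole number of records")
    records = []
    for offset in range(0, len(buffer), RECORD_SIZE):
        ts, term, ttype, acct, cents = struct.unpack_from(RECORD_FORMAT, buffer, offset)
        records.append({
            "timestamp": ts.decode("ascii"),
            "terminal_id": term.decode("ascii").strip(),
            "type": ttype.decode("ascii"),
            "account": acct.decode("ascii"),
            "amount_cents": cents,
        })
    return records


def handle_tool_call(arguments, backend):
    """Forward a request to the backend and wrap the reply as an MCP tool result."""
    reply = backend(encode_request(arguments))
    return {"content": [{"type": "text", "text": json.dumps(decode_reply(reply))}]}


def fake_backend(request_bytes):
    """Stand-in for the existing application; returns one invented record."""
    terminal, _limit = struct.unpack(REQUEST_FORMAT, request_bytes)
    return struct.pack(RECORD_FORMAT, b"20240101120000", terminal, b"WD", b"0000123456", 2500)


result = handle_tool_call({"terminal_id": "T001", "limit": 5}, fake_backend)
```

Keeping the encoding and decoding in the bridge is what allows the backend application to remain unchanged: it sees the same record formats it has always handled.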

Demonstration: Transaction Analysis

A demonstration of this approach uses a small transaction dataset stored on Nonstop. The data includes timestamps, terminal identifiers, transaction types, account numbers, and amounts.

When queried, the AI client retrieves the data and applies standard analytical techniques to identify patterns of interest. These include duplicate transactions, clusters of rapid withdrawals, and repeated behaviours that may warrant closer review. The value here is not in any single observation, but in the ability to explore the data interactively and follow up on emerging questions.
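As a rough illustration of the kind of analysis involved, the sketch below flags exact duplicate transactions and accounts with several withdrawals in a short window. The field names, the `WD` type code, the five-minute window, the threshold of three, and the sample rows are all assumptions invented for this example; they do not come from the demonstration dataset.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def find_duplicates(transactions):
    """Return lists of row indexes whose fields are all identical."""
    groups = defaultdict(list)
    for index, tx in enumerate(transactions):
        key = (tx["timestamp"], tx["terminal_id"], tx["type"], tx["account"], tx["amount"])
        groups[key].append(index)
    return [indexes for indexes in groups.values() if len(indexes) > 1]


def find_rapid_withdrawals(transactions, window=timedelta(minutes=5), min_count=3):
    """Return accounts with at least min_count withdrawals inside one time window."""
    by_account = defaultdict(list)
    for tx in transactions:
        if tx["type"] == "WD":
            by_account[tx["account"]].append(datetime.fromisoformat(tx["timestamp"]))
    flagged = []
    for account, times in by_account.items():
        times.sort()
        start = 0
        for end in range(len(times)):
            # Shrink the window from the left until it spans no more than `window`.
            while times[end] - times[start] > window:
                start += 1
            if end - start + 1 >= min_count:
                flagged.append(account)
                break
    return flagged


# Invented sample rows, for illustration only.
sample = [
    {"timestamp": "2024-01-01T10:00:00", "terminal_id": "T1", "type": "WD", "account": "A1", "amount": 100},
    {"timestamp": "2024-01-01T10:01:00", "terminal_id": "T2", "type": "WD", "account": "A1", "amount": 100},
    {"timestamp": "2024-01-01T10:03:00", "terminal_id": "T3", "type": "WD", "account": "A1", "amount": 200},
    {"timestamp": "2024-01-01T10:00:00", "terminal_id": "T1", "type": "DP", "account": "A2", "amount": 50},
    {"timestamp": "2024-01-01T10:00:00", "terminal_id": "T1", "type": "DP", "account": "A2", "amount": 50},
    {"timestamp": "2024-01-01T10:00:00", "terminal_id": "T1", "type": "WD", "account": "A3", "amount": 20},
    {"timestamp": "2024-01-01T11:00:00", "terminal_id": "T1", "type": "WD", "account": "A3", "amount": 20},
]

duplicates = find_duplicates(sample)
rapid = find_rapid_withdrawals(sample)
```

In an interactive session the thresholds would be adjusted as questions emerge, which is the exploratory style the paragraph above describes.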

Importantly, the Nonstop system continues to operate exactly as before. The AI is analysing results returned through defined interfaces, not interfering with production workloads.

Technical Considerations

Several aspects of the integration are worth noting:

- Protocol adaptation: MCP exchanges JSON-RPC 2.0 messages, a web-oriented format that native Nonstop interfaces do not use. uLinga Nexus handles the translation in both directions.

- Capability definition: Nonstop programs are exposed declaratively. Adding a new query or operation involves configuration rather than custom integration code.

- Connection management: Pooling, timeouts, and error handling are managed centrally, insulating both sides from implementation detail.

- Security: Authentication, authorisation, and auditing are enforced at a single integration point, consistent with Nonstop operational expectations.
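The considerations above can be sketched in a few lines. This is a generic illustration of declarative capability definitions with a single authorisation and audit point, not the uLinga Nexus configuration format: the `Capability` fields, the target names, and the role names are hypothetical, and connection pooling is omitted for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capability:
    name: str               # tool name exposed to AI clients
    target: str             # hypothetical identifier of the backend that serves it
    allowed_roles: frozenset
    timeout_seconds: float


# Adding a capability means adding an entry here, not writing integration code.
CAPABILITIES = {
    cap.name: cap
    for cap in [
        Capability("list_transactions", "TXN-QUERY", frozenset({"analyst", "auditor"}), 5.0),
        Capability("recent_errors", "ERR-LOG", frozenset({"support"}), 2.0),
    ]
}

audit_log = []


def dispatch(user, role, tool_name, arguments, backends):
    """Authorise, audit, and route one tool call at a single integration point."""
    cap = CAPABILITIES.get(tool_name)
    if cap is None:
        audit_log.append((user, tool_name, "unknown"))
        raise KeyError(f"no such capability: {tool_name}")
    if role not in cap.allowed_roles:
        audit_log.append((user, tool_name, "denied"))
        raise PermissionError(f"role {role!r} may not call {tool_name}")
    audit_log.append((user, tool_name, "allowed"))
    # The per-capability timeout is passed to the backend call.
    return backends[cap.target](arguments, timeout=cap.timeout_seconds)


# Stand-in backend for illustration; a real one would call the Nonstop application.
backends = {"TXN-QUERY": lambda arguments, timeout: {"rows": 0, "timeout": timeout}}

allowed = dispatch("alice", "analyst", "list_transactions", {}, backends)
try:
    dispatch("bob", "support", "list_transactions", {}, backends)
except PermissionError:
    pass  # the refusal is still recorded in audit_log
```

Because every call passes through `dispatch`, the access decision and the audit record are produced in one place regardless of which backend ultimately serves the request.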

Broader Applications

While transaction analysis is a convenient example, the same approach can be applied more broadly. Operational teams can query logs or performance metrics. Support staff can investigate recent errors without navigating multiple tools. Compliance reporting can be driven by direct questions rather than predefined extracts.

In each case, the emphasis is on access and analysis, not automation or control. The AI assists with understanding data that already exists, using interfaces that are already trusted.

What This Means for Nonstop Environments

For many years, modernisation discussions have tended to frame Nonstop systems as something to be isolated or replaced. This approach offers a third option: retain what works, and extend how it can be used.

By providing a controlled bridge between AI tools and Nonstop systems, organisations can make long-lived data more accessible without compromising reliability or stability. The platform remains unchanged, but its information becomes easier to explore and understand.

uLinga Nexus brings practical AI capabilities to Nonstop systems, while allowing them to continue operating exactly as designed.